.

.

The first of two feature columns on this subject.

Quantum computing may be just around the corner or it may be, for all practical purposes, permanently out of reach: the physics needed for a useful quantum computer has not yet been discovered, and may in fact not exist.

A quantum computer, real or potential, is essentially different from an adding machine. Whereas the dials in Pascal's A.D. 1645 brass calculator always line up to read out exactly one 6-digit number, the set of qubits in a quantum register exist in a superposition of states: when the register is interrogated one of these states is read out, with a definite probability, and the remaining information is lost. Because the register can simultaneously be "in" a huge number of states, a huge number of calculations may be carried out simultaneously. But the inputs to a quantum computer must be organized to take advantage of superposition, and the calculating process must force the probabilistic output to give useful information. This is the problem of programming a quantum computer, and in certain important and interesting cases it has been solved. One of the quantum computing algorithms, a factorization algorithm due to Peter Shor, will be the focus of these two columns.

Communication security today is almost universally ensured by the use of RSA encryption. This method relies on the inaccessibility of large prime factors of a large composite number. The problem is an artificial one: the encrypter takes two (or more) large primes and multiplies them. The decrypter tries to work backwards from the product to the factors. It is hard work. The largest number factored so far ( "RSA-640") had 193 decimal digits and took "approximately 30 2.2GHz-Opteron-CPU years," over five months of calendar time. At that rate a 1024-bit number, the size currently recommended by a commercial cryptology site, would take on the order of 10145 years ("bit" is short for "binary digit;" each additional bit contributes a factor of 2 to the size of the calculation). The site adds: "For more security or if you are paranoid, use 2048 or even 4096 bits."

A quantum computer of suitable size could factor these large numbers in a much shorter time. For a 1024-bit number, Shor's Algorithm requires on the order of 10243, about one billion, operations. I do not have any information on how quickly quantum operations can be executed, but if each one took one second our factorization would last 34 years. If a quantum computer could run at the speed of today's electronic computers (100 million instructions per second and up) then factorization of the 1024-bit number would be a matter of seconds.

A note on "suitable size." To run Shor's Algorithm on a 1024-bit number requires two quantum registers, one of 2048 qubits and one of 1024. These qubits all have to be "in coherence," so that the totality of their states behaves as a single, entangled state. (More about entanglement later). It was reported as an extraordinary technical feat when IBM scientists in 2001 constructed a coherent quantum register with 7 qubits and used it with Shor's Algorithm to factor 15.

This column and next month's will present a description of Shor's Factorization Algorithm in terms appropriate for a general mathematical audience. This month will cover the number-theoretical underpinning along with the Discrete and Fast Fourier Transforms. These convert factorization into a frequency-detection problem which is structured so that it can be adapted for quantum computation. The problem itself has a definite answer but takes exponential time to get there. Next month's column will address the more specifically quantum-computational aspects of the algorithm, including superposition and entanglement, leading up to Shor's ingenious quantum jiu-jitsu, which forces the quantum read-out, with high probability, to give a useful answer. That probability is high enough, and the running time on a suitable machine would be short enough, for the calculation to be repeated until the unknown factors are produced.

The light from a star can be split into a spectrum, where the characteristic frequencies of its elements can be detected. Analogously, a composite number N can be made to generate a spectrum, from which its factors can be calculated.

Choose a number a relatively prime to N, and make the list of integer powers of a modulo N: a, a2, a3, ... . If a and N are relatively prime, it follows from a theorem of Euler that this list will eventually include the number 1. (Euler's Theorem says specifically that if φ(N) is the number of positive integers less that N which are coprime to N then aφ(N) is congruent to 1 modulo N). Suppose this happens for an even power of a, say a2b = 1 mod N, i.e. a2b – 1 = 0 mod N. This means that (ab + 1) (ab – 1) is a multiple of N; if it is one times N we have our factors; otherwise the Euclidean Algorithm will speedily find a common factor of, say, ab + 1 and N.

These examples are trivially simple, but illustrate the phenomenon:

How do we get our hands on b? Just examining the terms of the sequence and waiting for 1 to show up takes too long, because the length of the list is commensurate with the number N we want to factor: it increases exponentially with the number, say n, of bits used to write N. But think of the stream of numbers a mod N, a2 mod N, a3 mod N, ... as the light emitted by N. If we can find the frequency, or equivalently the period, with which this sequence repeats itself, we can use the equivalence aj = ak <=> aj–k = 1 (modulo N), and find a factorization of N as above. We will see that on a quantum computer, this computation requires a number of steps increasing only as a polynomial in n. That is the key to quantum factorization.

Fourier transforms detect periodicity; we will find b using a quantum adaptation of the Fast Fourier Transform, a speeded-up version of the Discrete Fourier Transform, which itself arises from adapting to sequences the Fourier Series designed to work with continuous functions.

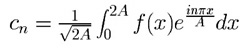

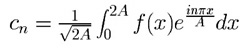

The (complex) Fourier coefficients of a real-valued function f(x) defined on [0,2A] are

.

.

Notes:

,

,

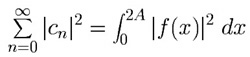

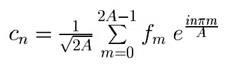

Suppose now we think of a sequence f = f0, f1, ... , f2A–1 (A now an integer), as the set of values at x = 0, x = 1, x = 2, ... , x = 2A–1 of a function f(x) defined on the interval [0,2A], and that we define a new cn by replacing the Fourier integral with a left hand sum with 2A equal subdivisions of length 1. This sum only involves the elements of f:

.

.

Suppose that f has period p, i.e. fm+p = fm for every value of m; the simplest case to analyze is when p is a divisor of 2A, say p = 2A/k, k an integer. In that case, if n is not a multiple of k (green boxes in the examples below) the periodic reappearances of a sequence item fm are multiplied by coefficients which cycle through a complete set of roots of 1, and thereby add up to zero. The explicit formula is:

So the only chance for a non-zero Fourier coefficient is when n is a multiple of k, (and then, up to a factor, c0, ck, c2k, ... , c(p–1)k are the 0th, 1st, 2nd, ... , (p–1)-st Fourier coefficients of the sequence f restricted to a single period).

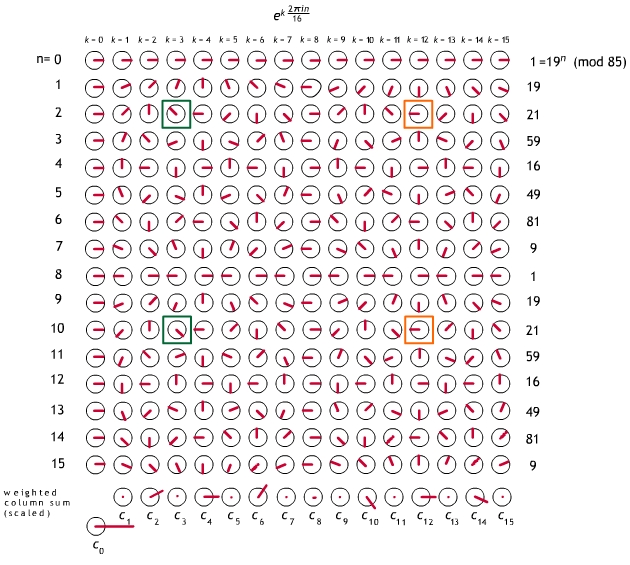

Fig. 1. Discrete Fourier

Transform analysis of the sequence given by the

first 16 powers of 19 (modulo 85), a sequence with period 8

and frequency 16/8 = 2. When n is a multiple of 2 (e.g. orange boxes)

every re-occurrence of a sequence item gets the same coefficient,

and cn has a chance of being nonzero. Otherwise

(e.g. green boxes) it is zero. The complex numbers in the matrix are

graphically represented as unit vectors. Each cn is the

weighted vector sum of the entries in the column above it, weights

coming from the fk column on the right, with

an overall factor of 1/![]() = 1/4.

The cn have been uniformly scaled to fit in the picture.

= 1/4.

The cn have been uniformly scaled to fit in the picture.

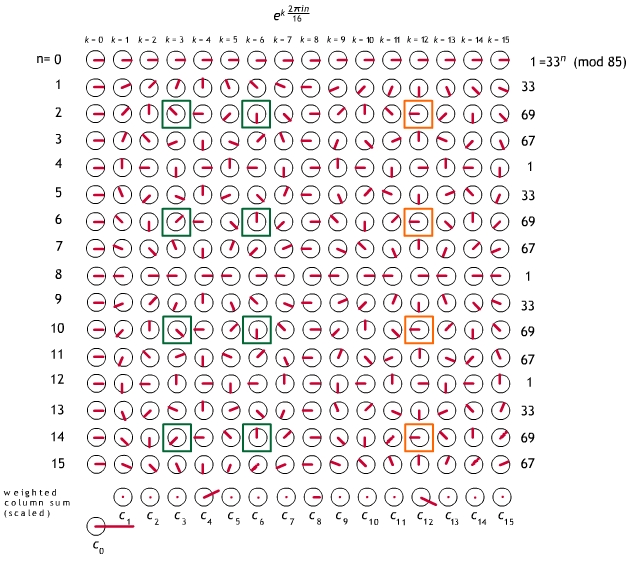

Fig. 2. Discrete Fourier Transform analysis of the sequence given by the first 16 powers of 33 (modulo 85). This sequence has period 4 and frequency 16/4=4. The non-zero cn only occur for n a multiple of 4. Graphic conventions are the same as in Fig. 1.

Why powers of 2?

I have chosen for illustration a number (85) which generates power residue sequences with periods which are powers of 2, and I have chosen to analyze a length (16) of sequence which is also a power of 2. The second choice is not immaterial: the Fast Fourier Transform we will use, and its quantum adaptation, both require it. The first choice is only for covenience in generating a small example. Shor shows that to get a reliable read on the power residue period in general, in the problem of factoring N, one must analyze sequences of a length 2n between N and N2. So to analyze a number like 85 realistically, we would have to work with sequences of length 128.

The Fast Fourier Transform which adapts directly to quantum computation is the Radix-2 Cooley-Tukey algorithm, invented in 1965 (reinvented, actually, since it later turned out to have been known to Gauss). To control the width of this presentation, I am going to work with the order-8 Discrete Fourier Transform, rather than order-16 as above. Taking ω = eiπ/4= 2–1/2(1 + i) as our primitive 8th root of 1, the 0-7th powers of ω are

or

1, ω, i, iω, –1, –ω, –i, –iω,

the numbers represented graphically above as

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() ,

,

![]() .

.

The transform c of an arbitrary length-8 sequence f = f0, f1, ..., f7, given by

c_n.jpg) ,

,

| 1 | 1 | 1 | 1 | 1 | 1 | 1 | 1 | f0 |

| 1 | ω | i | iω | –1 | –ω | –i | –iω | f1 |

| 1 | i | –1 | –i | 1 | i | –1 | –i | f2 |

| 1 | iω | –i | ω | –1 | –iω | i | –ω | f3 |

| 1 | –1 | 1 | –1 | 1 | –1 | 1 | –1 | f4 |

| 1 | –ω | i | –iω | –1 | ω | –i | iω | f5 |

| 1 | –i | –1 | i | 1 | –i | 1 | i | f6 |

| 1 | –iω | –i | –ω | –1 | iω | i | ω | f7 |

| c0 | c1 | c2 | c3 | c4 | c5 | c6 | c7 |

by setting the last row to be f0 times the

first plus ... plus f7 times the eighth, the

whole sum divided by ![]() . This

calculation would require eight multiplications and seven additions

for each item in c, or 120 operations in all. Working

with a sequence of length 2n, the number of operations

would be 2n (2n+1 – 1).

. This

calculation would require eight multiplications and seven additions

for each item in c, or 120 operations in all. Working

with a sequence of length 2n, the number of operations

would be 2n (2n+1 – 1).

Our Fast Fourier Transform leads in n steps from the elements f0, ... f2n–1 of the input sequence to the coefficients c0, ... c2n–1 of its Discrete Fourier Transform. We illustrate the process here for n = 3. Each column represents a successive step of the calculation.

|

Line 0 1 2 3 4 5 6 7 |

Start f7 f6 f5 f4 f3 f2 f1 f0 |

Step 1 f3+f7 f2+f6 f1+f5 f0+f4 f3–f7 f2–f6 f1–f5 f0–f4 |

Step 2 f1+f5 + f3+f7 f0+f4 + f2+f6 f1+f5 – f3–f7 f0+f4 – f2–f6 f1–f5 + i(f3–f7) f0–f4 + i(f2–f6) f1–f5 – i(f3–f7) f0–f4 – i(f2–f6) |

Step 3 [f0+f4 + f2+f6] + [f1+f5 + f3+f7] [f0+f4 + f2+f6] – [f1+f5 + f3+f7] [f0+f4 – f2–f6] + i[f1+f5 – f3–f7] [f0+f4 – f2–f6] – i[f1+f5 – f3–f7] [f0–f4 + i(f2–f6)] + ω[f1–f5 + i(f3–f7)] [f0–f4 + i(f2–f6)] – ω[f1–f5 + i(f3–f7)] [f0–f4 – i(f2–f6)] + iω[f1–f5 – i(f3–f7)] [f0–f4 – i(f2–f6)] – i ω[f1–f5 – i(f3–f7)] |

= = = = = = = = |

Notes:

The most transparent explanation I know uses properties of polynomial long division. Here I will specalize to the example at hand.

c_n.jpg) .

.

-ana.jpg)

we can understand Step 1 as transforming the degree-7 polynomial f into two degree-3 polynomials (red boxes) each of which can produce, via its remainders, half of the cn. Then Step 2 transforms each of the degree-3 polynomials into two degree-1 polynomials (green boxes), each of which can produce a quarter of the cn, and Step 3 transforms each of the degree-1 polynomials into two degree-0 polynomials, which are their own remainders.

Continued in next month's Feature Column.

For the Fast Fourier Transform, I have followed Henry Laufer's text Discrete Mathematics and Applied Modern Algebra, Prindle, Weber & Schmidt, Boston 1984.

The Wikipedia entry on Fast Fourier Transform gives an alternative explanation; it also provides references to Cooley and Tukey's 1965 paper as well as to the spot in Gauss' Nachlass where he develops the technique, both items in its extensive bibliography on the ancient and recent history of the algorithm.

References on the "Quantum Fourier Transform" and on Shor's Algorithm:

D. Coppersmith, An Approximate Fourier Transform Useful in Quantum Factoring, IBM Research Report 07/12/94, available as arXiv:quant-ph/0201067.

A. Ekert and R. Jozsa, Quantum computation and Shor's factoring algorithm, Reviews of Modern Physics 68 (1996) 733-753

Peter W. Shor, Algorithms for Quantum Computation: In: Proceedings, 35th Annual Symposium on Foundations of Computer Science, Santa Fe, NM, November 20--22, 1994, IEEE Computer Society Press, pp. 124--134.

An

expanded version is available, under the title

Polynomial-Time Algorithms for Prime Factorization

and Discrete Logarithms on a Quantum Computer, as

arXiv:quant-ph/9508027.